Google DeepMind is now able to train tiny, off-the-shelf robots to square off on the soccer field. In a new paper published today in Science Robotics, researchers detail their recent efforts to adapt a subset of machine learning known as deep reinforcement learning (deep RL) to teach bipedal bots a simplified version of the sport. The team notes that while similar experiments have produced extremely agile quadrupedal robots in the past (see: Boston Dynamics’ Spot), much less work has been conducted on two-legged, humanoid machines. But new footage of the bots dribbling, defending, and shooting goals shows off just how good a coach deep reinforcement learning could be for humanoid machines.

DeepMind’s AI systems are ultimately meant for massive tasks like climate forecasting and materials engineering, but they can also absolutely obliterate human competitors in games like chess, Go, and even StarCraft II. None of those strategic maneuvers require complex physical movement and coordination, however. So while DeepMind has been able to study simulated soccer movements, it hasn’t been able to translate them to a physical playing field. That’s quickly changing.

To make the miniature Messis, engineers first developed and trained two deep RL skill sets in computer simulations: the ability to get up from the ground, and the ability to score goals against an untrained opponent. From there, they virtually trained their system to play a full one-on-one soccer matchup by combining these skill sets, then randomly pairing the agents against partially trained copies of themselves.

“Thus, in the second stage, the agent learned to combine previously learned skills, refine them to the full soccer task, and predict and anticipate the opponent’s behavior,” the researchers wrote in their paper’s introduction, later noting that, “During play, the agents transitioned between all of these behaviors fluidly.”
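For readers curious what pairing an agent against partially trained copies of itself can look like in practice, here is a minimal Python sketch of the general self-play idea. It is not the researchers’ code. The function names, the snapshot schedule, and the use of a simple integer as a stand-in for the agent’s learned parameters are all assumptions made for illustration.

```python
import random


def sample_opponent(snapshots, rng):
    """Pick one earlier, partially trained copy uniformly at random."""
    return rng.choice(snapshots)


def self_play(num_rounds, snapshot_every, seed=0):
    """Toy self-play loop (hypothetical; not the paper's implementation).

    The agent is represented only by a version counter. A real system
    would store and update neural network parameters instead.
    """
    rng = random.Random(seed)
    agent_version = 0            # stand-in for the learner's parameters
    snapshots = [agent_version]  # pool of earlier copies to play against
    matchups = []

    for round_idx in range(1, num_rounds + 1):
        opponent = sample_opponent(snapshots, rng)
        matchups.append((agent_version, opponent))

        agent_version += 1       # placeholder for one training update

        # Periodically save a copy of the current agent as a future opponent.
        if round_idx % snapshot_every == 0:
            snapshots.append(agent_version)

    return matchups, snapshots


if __name__ == "__main__":
    matchups, snapshots = self_play(num_rounds=10, snapshot_every=5)
    print("opponent pool:", snapshots)
    for agent, opponent in matchups:
        print(f"agent v{agent} plays snapshot v{opponent}")
```

Because opponents are drawn only from saved snapshots, the learner always faces a copy no more trained than itself, which is one simple way to keep matchups varied without needing a hand-built rival.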

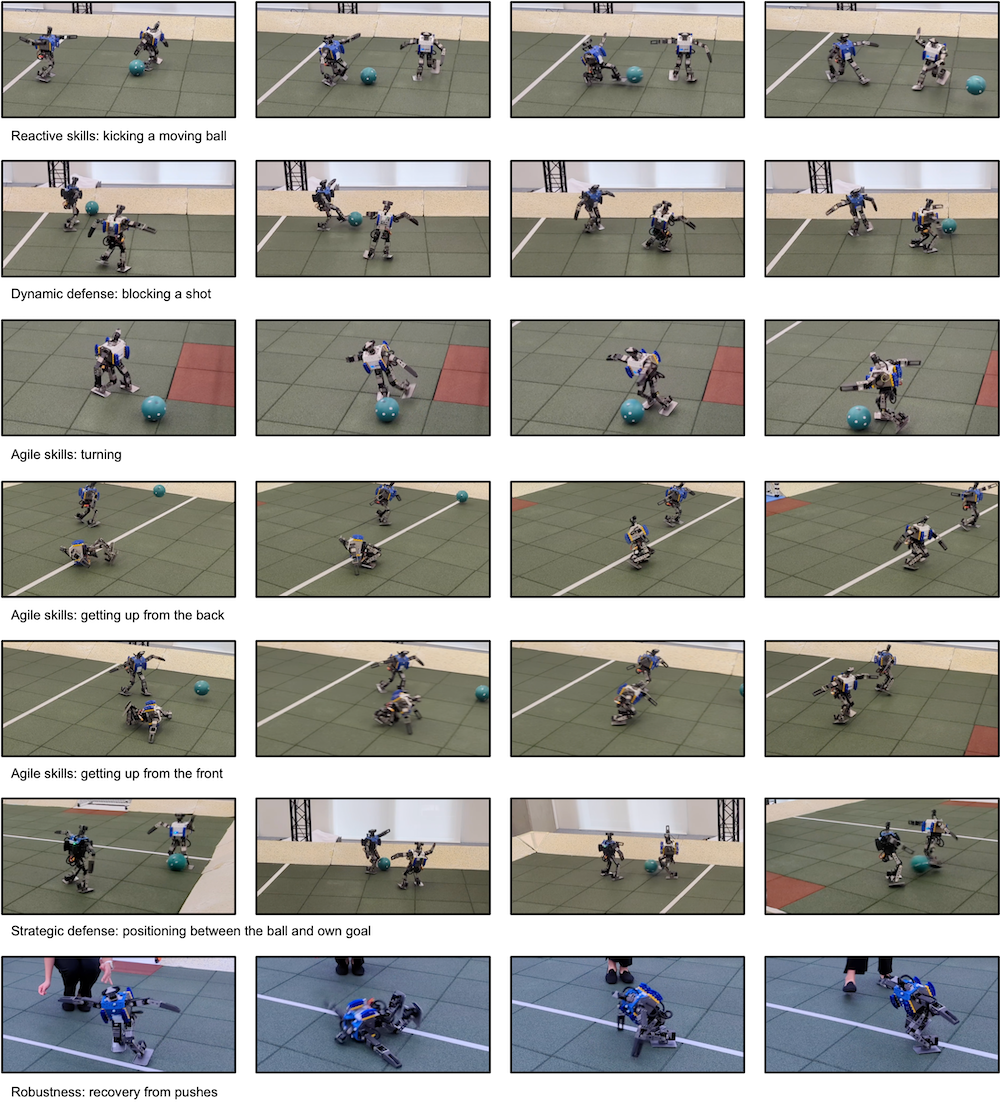

Thanks to the deep RL framework, DeepMind-powered agents soon learned to improve on existing abilities, including how to kick and shoot the soccer ball, block shots, and even defend their own goal against an attacking opponent by using their bodies as shields.

During a series of one-on-one matches between robots trained with deep RL, the two mechanical athletes walked, turned, kicked, and righted themselves faster than they did when engineers simply supplied them with a scripted baseline of skills. These weren’t minuscule improvements, either. Compared to a non-adaptable scripted baseline, the robots walked 181 percent faster, turned 302 percent faster, kicked 34 percent faster, and took 63 percent less time to get up after falling. What’s more, the deep RL-trained robots also showed new, emergent behaviors like pivoting on their feet and spinning. Such actions would be extremely challenging to script in advance.

There’s still some work to do before DeepMind-powered robots make it to the RoboCup. For these initial tests, researchers relied entirely on simulation-based deep RL training before transferring the learned skills to physical robots. In the future, engineers want to combine virtual training with real-time reinforcement training on the robots themselves. They also hope to scale up their robots, but that will require much more experimentation and fine-tuning.

The team believes that using similar deep RL approaches for soccer, as well as many other tasks, could further improve bipedal robots’ movements and real-time adaptation capabilities. Still, it’s unlikely you’ll need to worry about DeepMind humanoid robots on full-sized soccer fields, or in the labor market, just yet. At the same time, given their continuous improvements, it’s probably not a bad idea to get ready to blow the whistle on them.